I’m excited to have guest writer Chelsea Lamb from Business Pop share this post! In boardrooms and basement startups alike, artificial intelligence has graduated from buzzword to backbone. The question is no longer if AI has a place in your business, but where, how, and with what expectations. There’s plenty of noise in this space — from... Continue Reading →

Best practices for working with data in Microsoft PowerApps

The diverse collection of data connectors in PowerApps is impressive. There are over 250 different connectors available, not only from the Microsoft ecosystem but across the entire internet. You can connect to Salesforce, Gmail, Zendesk, Azure and so much more. These connections are great, but I have found that data connectors bring one of the... Continue Reading →

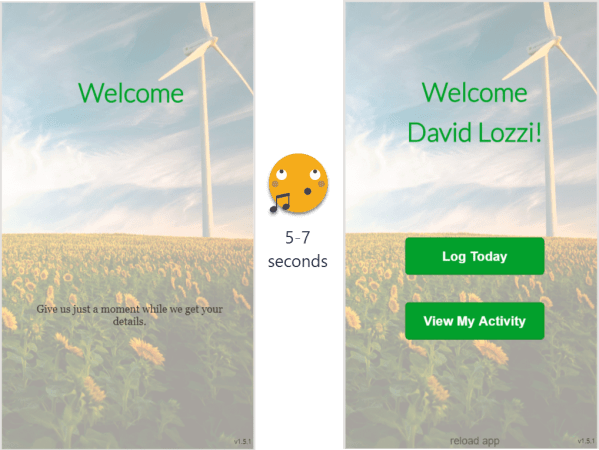

Tips to entertain your users while PowerApps gets their data, i.e. loading screens

Depending on where your data lives, it may take a few seconds for that data to display in the PowerApp. There is nothing that drives users more nuts than just staring at a screen as it does nothing, wondering if it's frozen or broken. PowerApps does have that useless little loading dots thing at the... Continue Reading →

Tips and tricks for working with Microsoft Flow

I Love Microsoft Flow. I don't know what it is, but I spend a lot of time in it, from small silly Flows to real time savers. I've shared a few of my Flows already, and will be sharing more. I may be a little addicted to automation, Flow being the biggest contender, but also... Continue Reading →

Use Microsoft Forms to collect data right into your Excel file

This post is the first of a few where we look at how easily the Office 365 stack integrates. In this series, we will: create a Form and have the data save directly in Excel (this post); add the Form to Microsoft Teams; notify the Team that a submission was made; and see how this looks on the phone. I... Continue Reading →

How to work with large lists in Office 365 SharePoint Online

What's a large list? Anything over 5,000 items. Why is this such a low limit? Great question, and Microsoft does a good job explaining it in their help documents: To minimize database contention, SQL Server, the back-end database for SharePoint, often uses row-level locking as a strategy to ensure accurate updates without adversely impacting other... Continue Reading →

My Users Don’t Like SharePoint because it is too slow!

This is Part 7 of my series on 'My Users Don't Like SharePoint...' As your SharePoint matures and grows, it can get considerably larger and more complex. Content databases can grow to hundreds of gigs, search indexes grow larger, users rely more on Excel Services, additional external business data is pulled in, some custom functionality is... Continue Reading →

My Users Don’t Like SharePoint because they can’t find what they’re looking for! Part 2

This is Part 6 of my series on 'My Users Don't Like SharePoint...' Actually, this is Part 2 of Part 5, if that makes sense. This is a continuation of this post. In the previous post, we covered how easy it is to enhance search to assist your users in finding what they really want. Our... Continue Reading →

Use PowerShell to manipulate the values of a SharePoint choice field.

Thanks to Knoots for suggesting the idea for this post, from a comment on Using PowerShell to play with SharePoint Items. Using PowerShell, we're going to walk through handling a Choice field in a list. Specifically, this is a calendar list using the Category field. This may come in handy if you want to automate changing... Continue Reading →

Questions on SharePoint 2010 Databases

What's the maximum size for a SharePoint database? This is a very common question across the board. Microsoft's documentation recommends a maximum of 200GB per content database. This isn't a limitation, just a recommendation. They are recommending this level due to performance and maintenance considerations. When your database gets much larger than that, there are some... Continue Reading →